Kubernetes storage provides data persistence for the cluster with shared storage, and/or volumes for Pods.

The volume classes are extensive and vary by cloud provider, but they typically include volume types for mechanical drives and SSDs, along with network-backed storage such as NFS, iSCSI, and CephFS.

To provision PersistentVolumes, we have to ensure that the desired storage classes have been created in the cluster.

Note: At most one storage class should be marked as default. If two or more are marked as default, each PersistentVolumeClaim must explicitly specify the storage class it uses. See the Kubernetes docs for more details.

It is not possible to join master nodes without having set a controlPlaneEndpoint:

One or more conditions for hosting a new control plane instance is not satisfied.
Unable to add a new control plane instance a cluster that doesn't have a stable controlPlaneEndpoint address
* The cluster has a stable controlPlaneEndpoint address.

But if you instead join a worker node (i.e. without --control-plane), then it is not only aware of other nodes in the cluster, but also which ones are masters. This is because the mark-control-plane phase does:

Marking the node as control-plane by adding the label "/master=''"
Marking the node as control-plane by adding the taints

So couldn't masters (--control-plane) join the cluster and use the role label to discover the other control plane nodes? Is there any such plugin or other way of configuring this behaviour to avoid separate infrastructure for load balancing the API server?

Looking at the kubeadm types definition I found this nice description that clearly explains it: ControlPlaneEndpoint sets a stable IP address or DNS name for the control plane; it can be a valid IP address or a RFC-1123 DNS subdomain, both with optional TCP port.
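To make the controlPlaneEndpoint description concrete (an IP address or an RFC-1123 DNS subdomain, each with an optional TCP port), here is a minimal TypeScript sketch of a pre-flight check. The function names are hypothetical and this is not kubeadm's own validation code; it only approximates the documented format, and it skips IPv6 to stay short.

```typescript
// Hypothetical helpers, not part of kubeadm: they approximate the documented
// shape of controlPlaneEndpoint (IP address or RFC-1123 DNS subdomain,
// optionally followed by ":port"). IPv6 is intentionally not handled.
const DNS_LABEL = /^[a-z0-9]([-a-z0-9]*[a-z0-9])?$/;

function isIPv4(host: string): boolean {
  const parts = host.split(".");
  return (
    parts.length === 4 &&
    parts.every((p) => /^\d{1,3}$/.test(p) && Number(p) <= 255)
  );
}

function isDnsSubdomain(host: string): boolean {
  // At most 253 characters; dot-separated lowercase alphanumeric labels
  // that may contain hyphens but not start or end with one.
  return (
    host.length > 0 &&
    host.length <= 253 &&
    host.split(".").every((label) => DNS_LABEL.test(label))
  );
}

function isValidControlPlaneEndpoint(endpoint: string): boolean {
  // Split off an optional ":port" suffix.
  const idx = endpoint.lastIndexOf(":");
  const host = idx === -1 ? endpoint : endpoint.slice(0, idx);
  const port = idx === -1 ? undefined : endpoint.slice(idx + 1);

  if (port !== undefined) {
    if (!/^\d{1,5}$/.test(port)) return false;
    const n = Number(port);
    if (n < 1 || n > 65535) return false;
  }
  return isIPv4(host) || isDnsSubdomain(host);
}
```

A value such as `k8s-api.example.com:6443` (a made-up DNS name pointing at a load balancer or virtual IP) passes this check, while names with uppercase letters or underscores, or ports outside 1 to 65535, do not.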

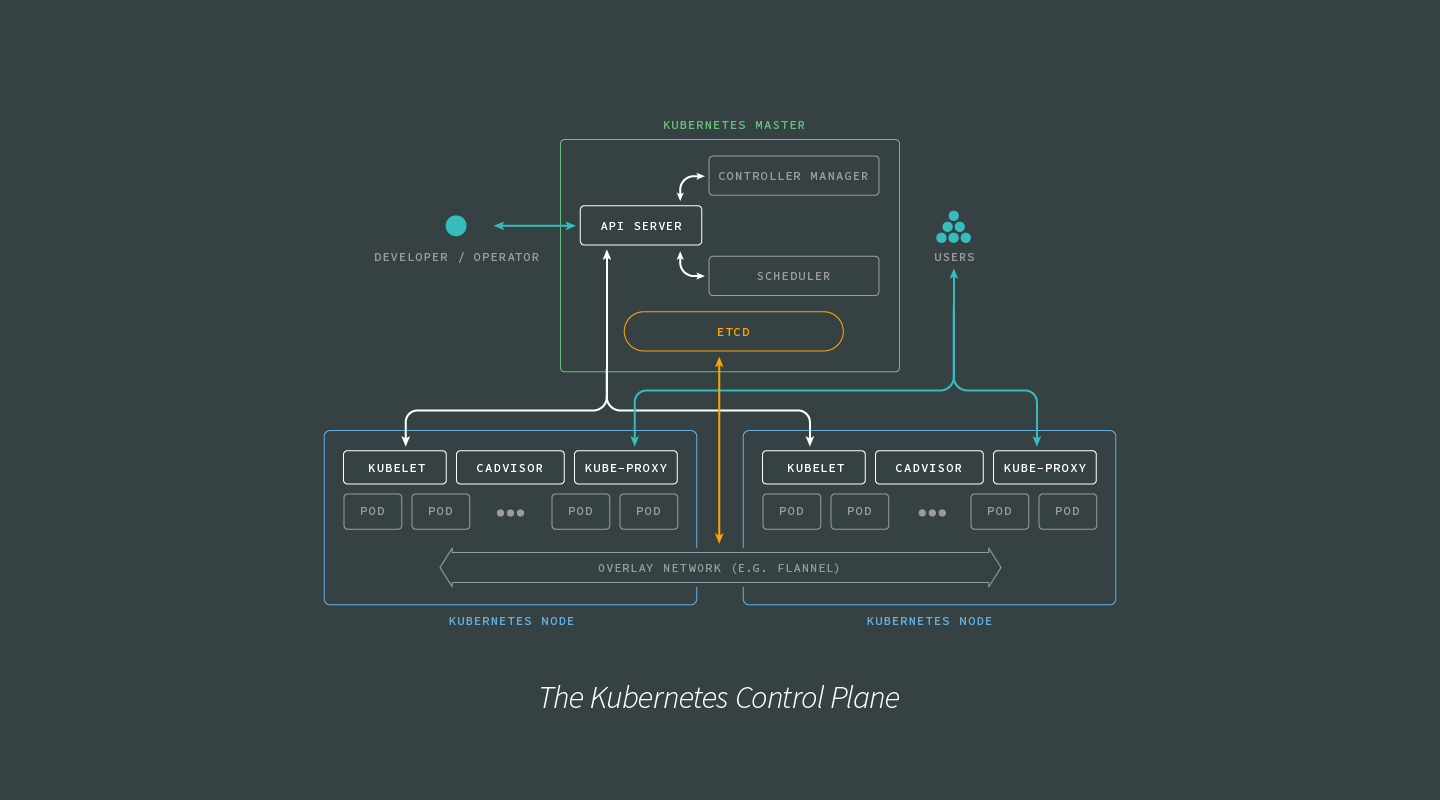

While it is possible to provision and manage a cluster manually on Google Cloud, its managed offering, Google Kubernetes Engine (GKE), offers an alternative. See the official Kubernetes docs for more details. The full code for this stack is on GitHub.

The control plane is a collection of processes that coordinate and manage the cluster's state, segmented by responsibilities. It also makes scheduling decisions to facilitate the applications and cloud workflows that run on the cluster.

* Identity: For authentication and authorization.
* Managed Infrastructure: To provide managed services for the cluster. At a minimum, this includes a virtual network for the cluster.
* Storage: To provide data stores for the cluster and its workloads.
* Recommended Settings: To apply helpful features and best practices, such as version pinning, resource tags, and control plane logging.

In Identity we demonstrate how to create typical IAM resources for use in Kubernetes. You'll want to create the Identity stack first.

Separation of identities is important for several reasons: it can be used to limit the scope of damage if a given group is compromised, can regulate the number of API requests originating from a certain group, and can also help scope privileges.

How you create the network will vary with your permissions and preferences. Typical setups will provide Kubernetes with the following resources:

* Public subnets for provisioning public load balancers.
* Private subnets for provisioning private load balancers.
* Private subnets for use as the default subnets for workers to run in.

Kubernetes requires that all subnets be properly tagged in order to determine which subnets it can provision load balancers in.

To ensure proper function, pass in all public and/or private subnets you intend to use into the cluster definition. If these need to be updated to include more subnets, or if some need to be removed, the change is accomplished with a Pulumi update.

By default, pulumi/eks will deploy workers into the private subnets, if any are specified. If no private subnets are specified, workers will be deployed into the public subnets. Running workers without associating a public IP address is highly recommended: it creates workers that will not be publicly accessible from the Internet, and they'll typically be shielded within your VPC.

EKS will automatically manage Kubernetes Pod networking through a networking plugin. This plugin is deployed by default on worker nodes as a DaemonSet named aws-node in all clusters provisioned with pulumi/eks and is configurable.

const network = new gcp. Network ( projectName, ) export const subnetworkName = subnet.
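The subnet defaults described above can be sketched in plain TypeScript. This is an illustration only: `planWorkerSubnets` and `loadBalancerTag` are hypothetical helpers, not pulumi/eks APIs, and the tag keys are the ones AWS documents for load balancer subnet discovery, so confirm them against the current EKS documentation before relying on them.

```typescript
// Hypothetical helpers for illustration; these are not pulumi/eks functions.

interface WorkerSubnetPlan {
  subnetIds: string[];
  usesPrivateSubnets: boolean;
}

// Mirrors the default described in the text: prefer private subnets when any
// are given, otherwise fall back to the public subnets.
function planWorkerSubnets(
  publicSubnetIds: string[],
  privateSubnetIds: string[],
): WorkerSubnetPlan {
  if (privateSubnetIds.length > 0) {
    return { subnetIds: privateSubnetIds, usesPrivateSubnets: true };
  }
  return { subnetIds: publicSubnetIds, usesPrivateSubnets: false };
}

// Tag that marks a subnet as eligible for public or private (internal)
// load balancers. Keys assumed from the AWS documentation.
function loadBalancerTag(kind: "public" | "private"): Record<string, string> {
  return kind === "public"
    ? { "kubernetes.io/role/elb": "1" }
    : { "kubernetes.io/role/internal-elb": "1" };
}

// Example with made-up subnet IDs.
const plan = planWorkerSubnets(["subnet-pub-a"], ["subnet-priv-a", "subnet-priv-b"]);
console.log(plan.subnetIds, loadBalancerTag("private"));
```

Keeping the selection rule in one small function makes the fallback to public subnets explicit, which is the case worth reviewing since those workers may be reachable from the Internet.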

In order to run container workloads, you will need a Kubernetes cluster.
